Let's be honest about something your fraud and risk leaders already know but rarely say out loud: hiring more analysts and tuning models are the two most common responses to alert overload, and neither one gets to the real problem. The queue fills back up. The pressure returns. The team is still catching up, because the fix was never where the problem actually lived.

Analysts spend the first chunk of every investigation doing work that should have been done before the alert was ever raised. They pull device logs, cross-reference sessions, check account history, and reconcile what three separate systems flagged. None of that is actual risk assessment. It's just catching up.

When leadership asks why throughput is down, "we're overwhelmed with alerts" sounds like a staffing complaint. It isn't. It's a systems problem that has learned to look like a human problem.

{{cta-1}}

The Architecture Built This. The Architecture Has to Fix It.

Risk infrastructure doesn't arrive fragmented. It gets that way over time.

Transaction monitoring came first. Then device intelligence, behavioural analytics, third-party feeds, and case management. Each tool was a reasonable response to a specific threat at a specific moment. No one sat down and designed the mess intentionally. But the result is a stack where every system scores its own slice of reality and escalates on its own terms.

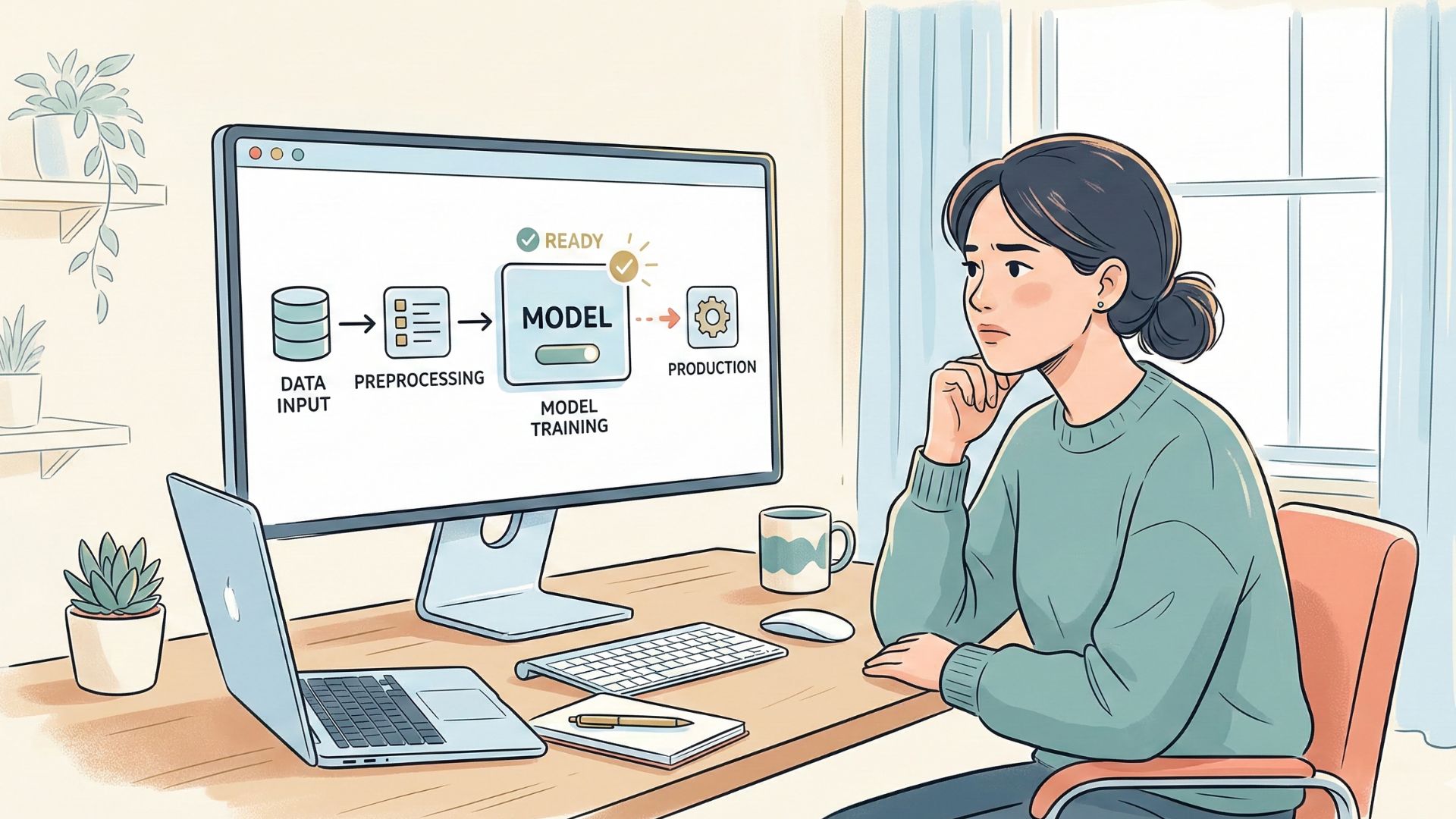

Identity isn't resolved in real time. Risk gets assessed per event, so one customer action generates three alerts: each technically valid, none of them telling the whole story.

Analysts open a case, and the first task isn't making a decision. It's reconstruction. Javelin Strategy research puts this at nearly 40% of investigation time: context gathering that should have been assembled upstream, before any human was involved. That time doesn't come back by hiring more people. It comes back by fixing what the system should have been doing in the first place.

What Gets Missed When Fragmentation Is Treated as Normal

The operational drag is easy enough to see. What's harder to notice is what it does, over months, to the people carrying it.

Chronic alert overload changes how analysts work. In high-volume environments where most alerts turn out to be noise, the instinct naturally shifts from "what is this, actually?" toward "how do I get through this queue?" That's not a character flaw. It's a rational adaptation to an unreasonable system.

Fraudsters rarely move in single events. They test, rotate devices, probe sessions, then execute. When those signals aren't connected upstream, each step looks like a minor anomaly in isolation. By the time the third alert reaches someone's queue, the transaction is often already done.

A 2023 Aite-Novarica report estimated that false declines cost U.S. merchants over $50 billion annually, more than actual fraud losses, because systems couldn't reliably separate real risk from disconnected signals firing out of context. Alert fatigue has a balance-sheet impact. It just rarely shows up on the right line.

{{cta-2}}

The Objection Worth Taking Seriously

The most common pushback on upstream correlation is latency. If you hold signals to assemble context before scoring, you introduce delay, and in fraud prevention, delay is itself a risk.

But modern streaming infrastructure has changed the practical reality of that trade-off considerably. Real-time identity resolution, event-stream correlation at ingestion, episode-level scoring: these aren't slow batch processes anymore. When the architecture is designed for it, context assembly happens in milliseconds. The latency cost of correlating signals is genuinely smaller than the accuracy cost of operating without that context.

The more useful question isn't whether you can absorb the latency of correlation. It's whether you can keep absorbing the false decline rates, investigation overhead, and fraud losses that fragmentation is producing right now.

What Changes When Signals Connect Before Escalation

The scenario most teams know well: a high-value transfer comes in from a new device mid-session. Transaction monitoring fires an alert. Device intelligence flags the hardware. Behavioural analytics picks up session anomalies. Three alerts enter three separate queues. One analyst closes the case on amount thresholds. Another escalates the device flag. The third sits waiting, and the fraudster completes the transfer before anyone has the full picture in front of them.

In an architecture where signals are correlated before escalation, that same sequence produces a different outcome. Identity, device, and behavioural signals come together before scoring. The episode (new device, inconsistent session, high-value transfer) surfaces as a single coherent case. One analyst sees the complete picture and makes a clear, informed call.
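To make the difference concrete, here is a minimal Python sketch of grouping signals into episodes before anything is escalated. It assumes identity has already been resolved to a single `customer_id`, uses a fixed inactivity gap (`window_s`, an illustrative 300 seconds) to bound an episode, and applies a deliberately simple corroboration rule. `Signal`, `Episode`, `correlate`, and `should_escalate` are hypothetical names chosen for illustration, not Parkar's implementation or any product's API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Signal:
    """One event from one detection system (hypothetical shape)."""
    customer_id: str  # assumption: identity already resolved to one customer key
    source: str       # e.g. "transaction", "device", "behaviour"
    detail: str
    ts: float         # event time, in seconds


@dataclass
class Episode:
    """All signals for one customer that fall within a single burst of activity."""
    customer_id: str
    signals: list = field(default_factory=list)

    @property
    def sources(self) -> list:
        # Distinct detection systems that contributed to this episode.
        return sorted({s.source for s in self.signals})


def correlate(signals, window_s: float = 300.0) -> list:
    """Group signals per customer into episodes. A customer's next signal joins
    their current episode unless more than window_s seconds have passed since
    that episode's latest signal, in which case a new episode starts."""
    episodes = []
    current = {}  # customer_id -> that customer's most recent Episode
    for sig in sorted(signals, key=lambda s: s.ts):
        ep = current.get(sig.customer_id)
        if ep is None or sig.ts - ep.signals[-1].ts > window_s:
            ep = Episode(sig.customer_id)
            episodes.append(ep)
            current[sig.customer_id] = ep
        ep.signals.append(sig)
    return episodes


def should_escalate(ep: Episode, min_sources: int = 2) -> bool:
    # Hypothetical rule for illustration: raise one case when independent systems corroborate.
    return len(ep.sources) >= min_sources


if __name__ == "__main__":
    # The scenario above: three systems fire on the same customer within seconds.
    signals = [
        Signal("cust-42", "device", "new device", 95.0),
        Signal("cust-42", "behaviour", "session anomaly", 98.0),
        Signal("cust-42", "transaction", "high-value transfer", 100.0),
    ]
    for ep in correlate(signals):
        print(ep.customer_id, ep.sources, should_escalate(ep))
    # prints: cust-42 ['behaviour', 'device', 'transaction'] True
```

A production system would do this grouping on a streaming engine as events arrive, rather than sorting a finished list, and would score the episode as a whole instead of counting sources. What the sketch is meant to show is the shape of the output: one case carrying three signals, not three cases carrying one each.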

The volume problem largely resolves itself once the correlation problem is solved properly. Teams don't need to move faster. The system needs to connect the dots before it raises its hand.

Parkar's POV

At Parkar, we treat alert fatigue as a design signal rather than an operational one.

When platforms generate alerts from fragmented systems and expect analysts to piece together identity, behaviour, and history after the fact, the burden has landed in the wrong place.

Risk infrastructure should be doing that assembly work upstream. Correlation should come before alerting, not after.

We bring identity, behavioural, and risk signals together earlier in the process, so teams see fewer cases, each one carrying the context needed to actually act on it. Not more dashboards. Not another round of threshold tuning. A different architecture that produces decisions instead of reconstruction.

If your analysts are spending more time figuring out what an alert means than deciding what to do about it, a larger team won't fix that. A better-connected system will.

If this gap sounds familiar, it's worth a conversation. We work directly with fraud and risk teams to understand exactly where correlation is breaking down in their stack and what it's actually costing them.